The research group of Assistant Professor Wei Guo of the Institute for Sustainable Agro-Ecosystem Services in the Graduate School of Agricultural and Life Sciences at the University of Tokyo announced on June 15 that they had developed and released "EasyDCP," a Python package that automatically and accurately measures 3D data of potted plants from images taken with a digital camera. Using a combination of commercially available and open-source software, 3D traits of plants can be extracted at low cost from images captured with a regular digital camera. The group confirmed that the system achieves the same level of accuracy as a laser scanner. The source code and test data of "EasyDCP" have been published online (https://zenodo.org/record/4756537#.YMgmkkxUvIV) and are available to the public. The tool is expected to be applied in various fields of plant science where measurements of 3D traits have not yet been used. The findings were published in the international scientific journal Methods in Ecology and Evolution.

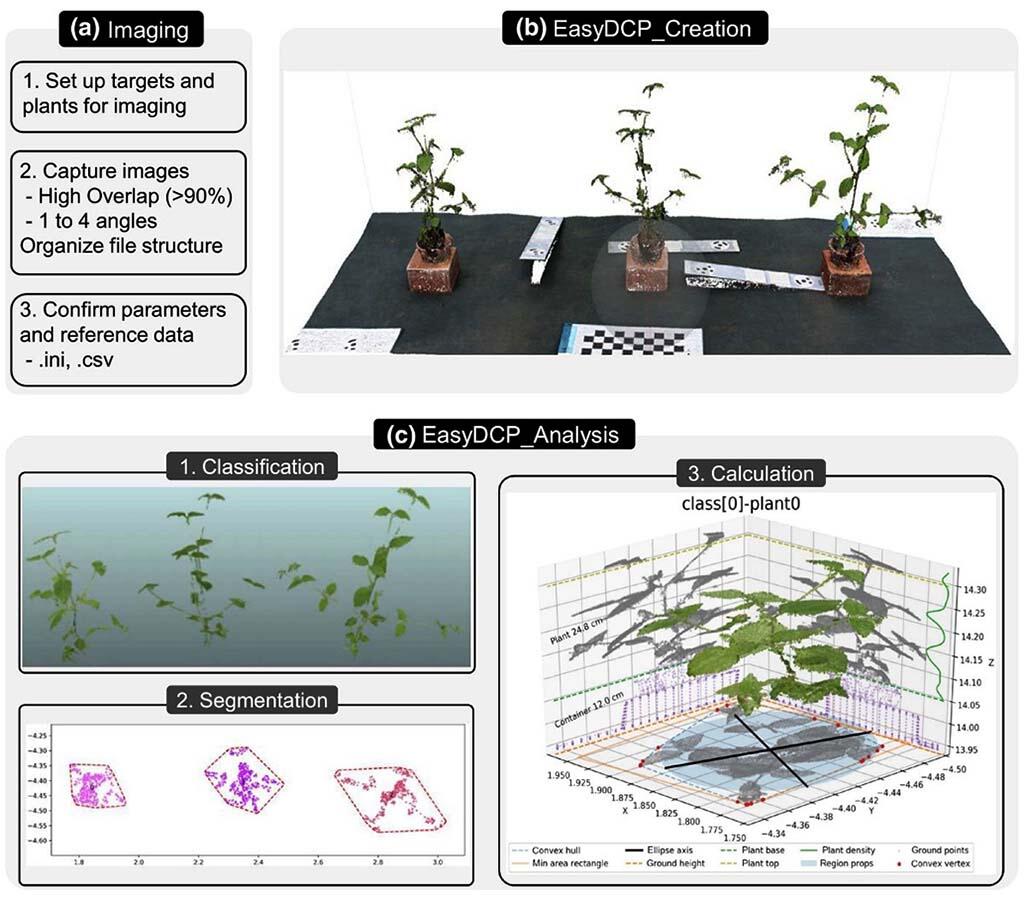

Measuring phenotypic traits such as plant height, projected leaf area, and plant posture requires large-scale dedicated facilities and expensive equipment, such as a laser scanner. To ease these requirements, the research group combined a regular digital camera with commercially available software (Agisoft Metashape) and open-source software (EasyDCP_Creation and EasyDCP_Analysis) into a single processing pipeline. The resulting system automatically measures the 3D traits of potted plants from captured images.

This system can quantitatively evaluate plant traits in three steps: (1) capturing images of a plant with a digital camera, (2) constructing a 3D point cloud from the captured 2D images, and (3) analyzing the 3D point cloud data to extract features related to plant traits. Any digital camera, including a smartphone camera, can be used regardless of its specifications.

The 3D point cloud is constructed from several photographs; the number required depends on the complexity of the target plant's shape.
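To make step (3) more concrete, the following is a minimal sketch of how plant height and projected leaf area could be derived from a colored point cloud. It is not the EasyDCP implementation or its API: the function names, the excess-green threshold, the grid cell size, and the assumption that the ground plane sits at a known height `ground_z` are all hypothetical choices made for illustration.

```python
import numpy as np


def excess_green_mask(colors, threshold=0.1):
    """Return a boolean mask of points that look predominantly green.

    colors: (N, 3) array of RGB values in [0, 1].
    Uses the excess-green index ExG = 2g - r - b on channels normalised
    so that r + g + b = 1. The threshold is an assumed value.
    """
    colors = np.asarray(colors, dtype=float)
    total = colors.sum(axis=1)
    total[total == 0] = 1.0  # avoid division by zero for black points
    r, g, b = (colors / total[:, None]).T
    return (2 * g - r - b) > threshold


def plant_height(points, ground_z=0.0, percentile=99.0):
    """Height of the plant above an assumed ground plane at z = ground_z.

    A high percentile is used instead of the maximum so that a few
    stray points do not dominate the estimate.
    """
    z = np.asarray(points, dtype=float)[:, 2]
    return float(np.percentile(z, percentile) - ground_z)


def projected_leaf_area(points, cell=0.005):
    """Approximate the area of the plant projected onto the x-y plane.

    Points are binned into square cells of side `cell` (same unit as the
    coordinates); the area is the number of occupied cells times the
    area of one cell.
    """
    xy = np.asarray(points, dtype=float)[:, :2]
    cells = np.floor(xy / cell).astype(np.int64)
    occupied = np.unique(cells, axis=0)
    return occupied.shape[0] * cell ** 2


def measure_plant(points, colors, ground_z=0.0, cell=0.005):
    """Keep green points only, then compute both traits."""
    points = np.asarray(points, dtype=float)
    green = points[excess_green_mask(colors)]
    if green.shape[0] == 0:
        raise ValueError("no green points found in the point cloud")
    return {
        "height": plant_height(green, ground_z=ground_z),
        "projected_leaf_area": projected_leaf_area(green, cell=cell),
    }
```

In this sketch the grid cell size trades resolution against sensitivity to gaps in the point cloud: a cell that is too small undercounts sparse leaves, while one that is too large overestimates the area around leaf edges.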

Credit: https://doi.org/10.1111/2041-210X.13645 licensed under CC BY 4.0

The research group grew five plant species (Amaranthus patulus, Commelina communis, Eleusine indica, Digitaria ciliaris, and Galinsoga quadriradiata) to evaluate the accuracy of "EasyDCP." They compared manually measured values of the height and leaf area of individual plants with those measured by a commercial laser scanner and by "EasyDCP."

The projected leaf area measured by "EasyDCP" was comparable to that measured by the laser scanner (coefficients of determination of 0.96 and 0.96 for EasyDCP and the laser scanner, respectively), while the measurement of plant height was more accurate with "EasyDCP" (coefficients of determination of 0.96 and 0.86 for EasyDCP and the laser scanner, respectively). Plants of various sizes can be measured simply by capturing their images with a digital camera. The system can also be built at low cost because the academic version of the commercially available 3D point cloud construction software costs in the range of tens of thousands of yen (US$100 and up). The newly developed system is expected to contribute to the quantitative evaluation of plant information, as it extracts information from 3D data based on the characteristic green color of plants.
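For readers unfamiliar with the metric, the sketch below shows one common way to compute a coefficient of determination between manual reference measurements and values estimated by a tool. The article does not state exactly how the reported values were calculated, so the use of the squared Pearson correlation (the R² of a simple linear regression) is an assumption, and the function name and sample numbers are hypothetical.

```python
import numpy as np


def determination_coefficient(reference, estimate):
    """Squared Pearson correlation between two paired measurement series.

    reference: manually measured values (e.g. plant height per plant).
    estimate:  values for the same plants produced by the tool under test.
    """
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    if reference.shape != estimate.shape or reference.size < 2:
        raise ValueError("need two equally sized series with at least 2 values")
    r = np.corrcoef(reference, estimate)[0, 1]
    return float(r ** 2)


if __name__ == "__main__":
    # Made-up numbers for illustration only, not data from the study.
    manual_height_cm = [12.0, 18.5, 25.1, 31.4, 40.2]
    estimated_height_cm = [11.6, 19.0, 24.3, 32.5, 39.1]
    print(determination_coefficient(manual_height_cm, estimated_height_cm))
```

A value close to 1 means the estimates track the manual measurements closely up to a linear relationship; it does not by itself rule out a systematic offset or scale error.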

Assistant Professor Guo says, "In this study, we used commercially available software for 3D reconstruction, but we would like to update it with open-source software in the next study. Also, some plants with complex shapes still cannot be successfully reconstructed in 3D, and only basic quantitative evaluation was made in the measurements of this study. In the future, we are planning to develop an algorithm that incorporates deep learning to perform more complex quantitative evaluation, such as counting the tillers and leaves of plants with complex structures."

This article has been translated by JST with permission from The Science News Ltd. (https://sci-news.co.jp/). Unauthorized reproduction of the article and photographs is prohibited.