Hisashi Ishihara, a lecturer at the Graduate School of Engineering, Osaka University, has proposed a rigorous new method for evaluating how well androids perform facial expressions, based on deformations in skin shape. He has shown that the method can also indicate how much each facial region contributes to an android's expressive performance and where that performance falls short of a human's. Ishihara said, "The term facial expressiveness is often used for both people and androids, but it is only vaguely defined. The android facial performance evaluation index I have proposed is only for certain aspects of expressiveness that I have more clearly defined. I intend to steadily improve the performance of androids by proposing various numerical indicators of expressiveness while considering the characteristics of human and android faces that people perceive as highly expressive." His results were published in Advanced Robotics, the journal of the Robotics Society of Japan.

Provided by Hisashi Ishihara, Graduate School of Engineering, Osaka University.

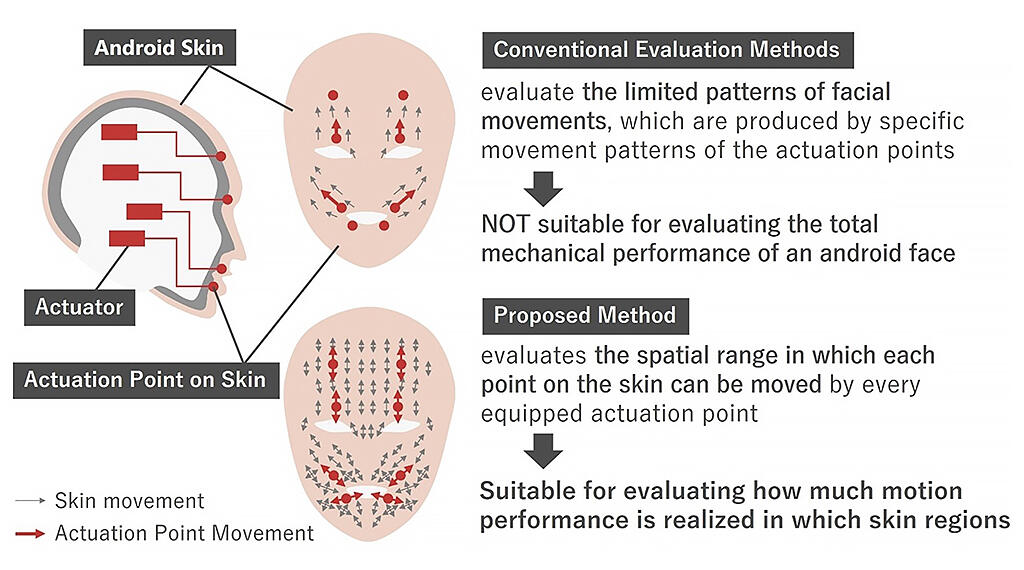

Previous evaluations of android faces were limited to specific movement patterns on the skin surface, such as those used to express joy or sadness. These expressions are created by combining specific movements of the points where the skin connects to the mechanical devices (actuators). However, such evaluations were insufficient for measuring the full, diverse range of movement at each skin position that is seen in facial expressions.

To address this, Ishihara proposed using the spatial range in which the skin can move as a numerical index of expressiveness. He did so by using optical motion capture to precisely measure the many variations in 3D motion that each part of the skin can undergo when the skin's actuation points are moved to their maximum extent.

In the index, the farther the skin can move in a particular direction, the greater the emotional expressiveness in that area of the face. The index was applied to two recently developed androids and three adult men. Ishihara found that the three-dimensional expressivity of these androids was generally one order of magnitude lower than that of humans in the upper part of the face and two to three orders of magnitude lower in the lower part.

When the index was limited to the single direction in which each skin section moves most widely, the androids' expressivity in the upper part of the face reached a level almost equivalent to that of humans, and their expressivity in the lower part was confirmed to be only about one order of magnitude lower. This difference in expressivity can be easily assessed by representing the range of skin motion as an approximate octahedron.
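The gap between the three-dimensional and single-direction results can be illustrated with a small sketch. It is not Ishihara's published formula. It assumes, for illustration only, that the octahedron is aligned with the x, y, and z axes and that its vertices sit at the farthest displacement recorded along each axis. The function names and the sample displacements are hypothetical, and the numbers are invented rather than measured.

```python
import math

def axis_ranges(displacements):
    """Return the (x, y, z) travel ranges of one skin point.

    `displacements` holds (dx, dy, dz) offsets from the neutral pose,
    e.g. in millimetres. The neutral pose (0, 0, 0) is always included
    so that every range spans the resting position.
    """
    points = list(displacements) + [(0.0, 0.0, 0.0)]
    return tuple(
        max(p[i] for p in points) - min(p[i] for p in points)
        for i in range(3)
    )

def octahedron_volume(displacements):
    """Volume of an axis-aligned octahedron spanning the travel ranges.

    An octahedron whose six vertices lie on the coordinate axes has
    volume (range_x * range_y * range_z) / 6.
    """
    rx, ry, rz = axis_ranges(displacements)
    return rx * ry * rz / 6.0

def widest_range(displacements):
    """Single-direction index: the largest travel range along any axis."""
    return max(axis_ranges(displacements))

def orders_of_magnitude_gap(human_value, android_value):
    """How many powers of ten separate the two index values."""
    return math.log10(human_value / android_value)

# Invented samples: the human point moves freely in all three directions,
# the android point moves just as far along x but barely along y and z.
human_samples = [(5.0, 5.0, 5.0), (-5.0, -5.0, -5.0)]
android_samples = [(5.0, 0.5, 0.5), (-5.0, -0.5, -0.5)]

gap_3d = orders_of_magnitude_gap(
    octahedron_volume(human_samples), octahedron_volume(android_samples)
)
print("3D gap in orders of magnitude:", round(gap_3d, 2))
print("widest range, human vs android:",
      widest_range(human_samples), widest_range(android_samples))
```

With these invented samples, the two points have the same widest range, yet the volume-based value differs by about two orders of magnitude because the android point is nearly confined to one direction. This mirrors the pattern reported for the upper part of the face.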

In other words, to bring the expressive power of androids up to that of humans, it would be effective to improve the upper part of the face so that it can move in directions that were not previously possible. At the same time, the lower part of the face needs improvements that further increase the intensity of the movements that are already possible.

This article has been translated by JST with permission from The Science News Ltd. (https://sci-news.co.jp/). Unauthorized reproduction of the article and photographs is prohibited.