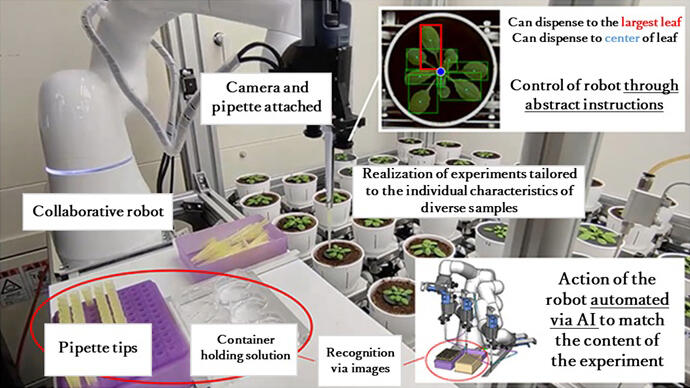

A research team has announced the development of an AI system capable of recognizing non-standardized experimental environments and autonomously generating robot arm movements for conducting experiments. The team consists of Senior Scientist Nobuyuki Tanaka of the Laboratory for Biologically Inspired Computing at the RIKEN Center for Biosystems Dynamics Research, Senior Scientist Miki Fujita of the Mass Spectrometry and Microscopy Unit at the RIKEN Center for Sustainable Resource Science, and Graduate Student Junbo Zhang and Associate Professor Weiwei Wan from the Graduate School of Engineering Science at Osaka University. By combining a general-purpose collaborative robot arm with a 3D model reproduced on a computer, the team developed a generative AI that autonomously generates appropriate experimental operations. The team demonstrated that it is possible to automate an experiment in which the shape of each individual plant is identified and a solution is administered to its leaves under specific conditions. The actual device was released to the public on December 25.

The results, which are expected to lead to the automation of experiments involving ever-changing plants, cells, and compounds, were published in the November 27 issue of the international academic journal IEEE Transactions on Automation Science and Engineering.

Provided by RIKEN

Recent research into biological phenomena and materials science requires the acquisition of data through numerous experiments and observations. Automating these experiments with robots is expected to eliminate the influence of experimenters on the subjects. However, automation has so far required a customized experimental environment and a program tailored to each robot. It is also crucial to set exogenous parameters, such as reagent addition, according to the constantly changing size and shape of targets such as plants and cells. To address these challenges, the research team aimed to achieve autonomous experiments by giving robots and AI-based systems the flexibility to adapt to various experimental conditions.

As a model for autonomous experiments, the team focused on developing an AI system capable of measuring a small amount of liquid with a pipette and dispensing it onto a sample. The envisioned task included a series of operations, such as installing pipette tips, removing them after dispensing, and repeating the process.

First, a task analysis of the human experiment was conducted to identify the components of movements to be performed by the robot. To automate this task with robots, collaborative robots designed for coworking with humans in the same environment were employed. Two cameras and a micropipette were attached to the tip of the robot arm to observe the experimental environment. The pipette, commonly used in laboratories, had its surrounding parts 3D printed to enable operation by a robotic arm.

The collaborative robot is equipped with a "direct teaching" function that allows humans to teach the robot by physically demonstrating movements. When a user teaches a movement, a camera captures the surrounding experimental environment and generates image data. AI is then employed to recognize the experimental setup from the image data. Additionally, 3D models of the experimental environment, including robot arms, micropipettes, and liquid containers, are reproduced on a computer by loading the 3D data of each instrument.

The system was designed to use AI to learn positional deviations, automatically calculating and compensating for them each time a camera detects them. For the AI, Wan and his colleagues used their own independently developed WRS system. As a result, the robot autonomously identifies the positions of the instruments and samples used in experiments, without the need to fix them in place, and corrects any misalignment each time. The team successfully developed an AI robot system capable of executing complex experimental conditions from simple experimental protocols such as "Drop 100 µL of solution 1 into sample A."
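The idea of compensating for a detected positional deviation can be illustrated with a minimal sketch. It is not the team's method or the WRS API: it assumes the deviation is a pure translation (no rotation), and the names `Position` and `compensate` are hypothetical, introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    """A point in the robot's workspace, in millimetres (assumed unit)."""
    x: float
    y: float
    z: float


def compensate(taught_target: Position,
               taught_instrument: Position,
               detected_instrument: Position) -> Position:
    """Shift a taught target by the offset observed on an instrument.

    Assumption: the instrument was only translated since teaching,
    so the same offset applies to the target point on it.
    """
    dx = detected_instrument.x - taught_instrument.x
    dy = detected_instrument.y - taught_instrument.y
    dz = detected_instrument.z - taught_instrument.z
    return Position(taught_target.x + dx,
                    taught_target.y + dy,
                    taught_target.z + dz)
```

For instance, if a container taught at the origin is later detected 2 mm further along x and 1 mm back along y, a dispensing point taught at (100, 50, 20) would be shifted to (102, 49, 20). A real system would also need to handle rotation and camera calibration, which this sketch leaves out.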

Moreover, this system has been incorporated into RIPPS (RIKEN Integrated Plant Phenotyping System), RIKEN's proprietary automated plant cultivation and observation system. RIPPS can autonomously cultivate over 100 plant samples for more than one month under controlled growth conditions. As a result, it is now possible to give instructions such as "drop 20 µL of solution from container 1 onto the largest leaf of sample A," even though each of the many samples changes in shape and leaf size as it grows.
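To make the instruction format above concrete, the following sketch shows one way such a sentence could be turned into a structured command and resolved against per-leaf measurements. Everything here is an assumption for illustration: the grammar covers only the quoted sentence style, and `Command`, `Leaf`, `parse_instruction`, and `largest_leaf` are hypothetical names, not part of the team's system or RIPPS.

```python
import re
from dataclasses import dataclass

# Hypothetical grammar for the quoted instruction style only.
# Accepts both the micro sign (U+00B5) and the Greek letter mu (U+03BC).
_INSTRUCTION = re.compile(
    r"drop\s+(?P<volume>\d+(?:\.\d+)?)\s*[\u00b5\u03bc]L\s+of\s+solution\s+"
    r"from\s+container\s+(?P<container>\d+)\s+onto\s+the\s+"
    r"(?P<target>largest leaf|center)\s+of\s+sample\s+(?P<sample>\w+)",
    re.IGNORECASE,
)


@dataclass(frozen=True)
class Command:
    volume_ul: float   # volume to dispense, in microlitres
    container: int     # source container number
    target: str        # "largest leaf" or "center"
    sample: str        # sample label, e.g. "A"


@dataclass(frozen=True)
class Leaf:
    leaf_id: int
    area_mm2: float    # assumed output of an image-analysis step


def parse_instruction(text: str) -> Command:
    """Convert one instruction sentence into a Command, or raise ValueError."""
    match = _INSTRUCTION.search(text)
    if match is None:
        raise ValueError(f"unsupported instruction: {text!r}")
    return Command(
        volume_ul=float(match["volume"]),
        container=int(match["container"]),
        target=match["target"].lower(),
        sample=match["sample"],
    )


def largest_leaf(leaves: list[Leaf]) -> Leaf:
    """Pick the leaf with the greatest measured area at dispensing time."""
    if not leaves:
        raise ValueError("no leaves detected")
    return max(leaves, key=lambda leaf: leaf.area_mm2)
```

Because the largest leaf is chosen from measurements taken at dispensing time, the same instruction can be reused as the plant grows and a different leaf becomes the largest.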

The group verified the system's performance by automating six patterns of comparative experimental protocols on 96 Arabidopsis plants, formed by combining two choices: (1) selecting one of three prepared reagents and (2) dispensing it onto either the largest leaf or the center of the plant. Despite four cases of erroneous recognition, the installation itself achieved a 100% success rate. The system is also capable of recording data, including the actual drop location and time. In addition, the robot can perform tasks even in low-light conditions. Because the impact of human exhalation cannot be ignored when plant experiments are performed manually, automating the process is regarded as a significant advantage.
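The six patterns follow from three reagents times two dispensing targets. The sketch below enumerates them and spreads 96 plants across the patterns. The reagent names are placeholders, and the round-robin assignment is purely an assumption for illustration; the article does not say how plants were allocated to patterns.

```python
from itertools import product

REAGENTS = ("reagent_1", "reagent_2", "reagent_3")  # placeholder names
TARGETS = ("largest leaf", "center of the plant")


def build_patterns() -> list[tuple[str, str]]:
    """Every (reagent, target) combination: 3 x 2 = 6 patterns."""
    return list(product(REAGENTS, TARGETS))


def assign_round_robin(n_plants: int,
                       patterns: list[tuple[str, str]]) -> dict[int, tuple[str, str]]:
    """Assumed allocation: plant i gets pattern i modulo the pattern count."""
    return {plant: patterns[plant % len(patterns)] for plant in range(n_plants)}


if __name__ == "__main__":
    patterns = build_patterns()
    assignment = assign_round_robin(96, patterns)
    for pattern in patterns:
        count = sum(1 for p in assignment.values() if p == pattern)
        print(pattern, count)
```

Under this assumed allocation, each of the six patterns is applied to 16 of the 96 plants.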

Tanaka stated, "We believe that this achievement, which can recognize non-standardized experimental environments and automatically generate robot motions, is a fundamental technology that can offer a method for collaboration between humans and robots in experiments. For example, we believe we can conduct experiments in fields like botany to explore the uniqueness of leaves or engage in periodic sampling, such as extracting chemicals in response to changes in their synthesis. We look forward to contributing to the acceleration of life science research."

This article has been translated by JST with permission from The Science News Ltd. (https://sci-news.co.jp/). Unauthorized reproduction of the article and photographs is prohibited.